Walk any stamped-metal press line long enough and you'll see the same argument play out: one inspector calls a part a scratch reject, the next calls it a handling mark and lets it pass. Same defect. Two outcomes. That inconsistency is not a training problem. It's a taxonomy problem. And until you fix it, you cannot train a reliable AI vision model, because the model learns from your labels.

This guide lays out the six primary defect categories we work with on automotive stamped metal parts, the OEM specification criteria that define each one, and how AI classification models distinguish between them. We also include the PPAP mapping, because defect taxonomy only earns its keep when it feeds into a control plan that actually controls something.

Why Taxonomy Comes Before Algorithms

In our experience deploying vision systems across tier-1 body and chassis suppliers, the single biggest source of model underperformance is inconsistent ground-truth labeling. Not bad lighting. Not insufficient training data volume. Inconsistent labels.

A model trained on 10,000 images labeled by three operators with three different defect definitions will plateau well below the accuracy needed for production. We've seen models retrained on 40% fewer images, but with a consistent taxonomy enforced through written criteria, outperform models trained on the larger, inconsistently labeled dataset every time. Ground-truth quality beats data quantity.
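One way to put a number on label inconsistency before training is to have two inspectors label the same images and compute Cohen's kappa. The sketch below is illustrative only: the label names and sample data are made up, and the kappa value you treat as acceptable is a decision for your own program.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two labelers who labeled the same images in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Fraction of images where both labelers chose the same category.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each labeler's category frequencies.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both labelers used a single identical category throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical labels for six images from two inspectors.
inspector_1 = ["scratch", "scratch", "handling_mark", "scratch", "dent", "dent"]
inspector_2 = ["scratch", "handling_mark", "handling_mark", "handling_mark", "dent", "dent"]
kappa = cohens_kappa(inspector_1, inspector_2)
print(f"kappa = {kappa:.2f}")
```

A kappa well below 1 on a shared sample is a sign that the written criteria need work before any more images are labeled.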

Getting taxonomy right is therefore a prerequisite, not a nice-to-have. Here is the framework we recommend.

The Six Primary Defect Categories

1. Linear Scratches

A scratch is a unidirectional, continuous groove or score mark on the part surface. It is distinguished from handling marks by its depth profile and aspect ratio. OEM acceptance criteria typically specify a maximum depth (often 0.05 mm for Class A surfaces) and a maximum length per unit area. Directionality matters: process-origin scratches, such as die or tooling drag marks, tend to run parallel to the draw direction, while handling-origin scratches are randomly oriented.
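A written criterion of this kind can be expressed as a small check. This is a minimal sketch, not an OEM rule set: the 0.05 mm depth is the Class A figure quoted above, while the length-per-area limit and all names are hypothetical placeholders to be replaced with values from the applicable specification.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ScratchLimits:
    max_depth_mm: float            # e.g. 0.05 mm for Class A, per the text above
    max_length_mm_per_dm2: float   # hypothetical value; take it from the OEM spec

def scratch_disposition(depth_mm, total_length_mm, zone_area_dm2, limits):
    """Return an accept/reject string for the scratches found in one zone."""
    if zone_area_dm2 <= 0:
        raise ValueError("zone_area_dm2 must be positive")
    if depth_mm > limits.max_depth_mm:
        return "reject: depth"
    if total_length_mm / zone_area_dm2 > limits.max_length_mm_per_dm2:
        return "reject: length per area"
    return "accept"

class_a = ScratchLimits(max_depth_mm=0.05, max_length_mm_per_dm2=10.0)
print(scratch_disposition(0.03, 5.0, 1.0, class_a))
print(scratch_disposition(0.06, 5.0, 1.0, class_a))
```

Keeping the limits in one object per zone class makes it harder for labelers and the model's post-processing to drift apart.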

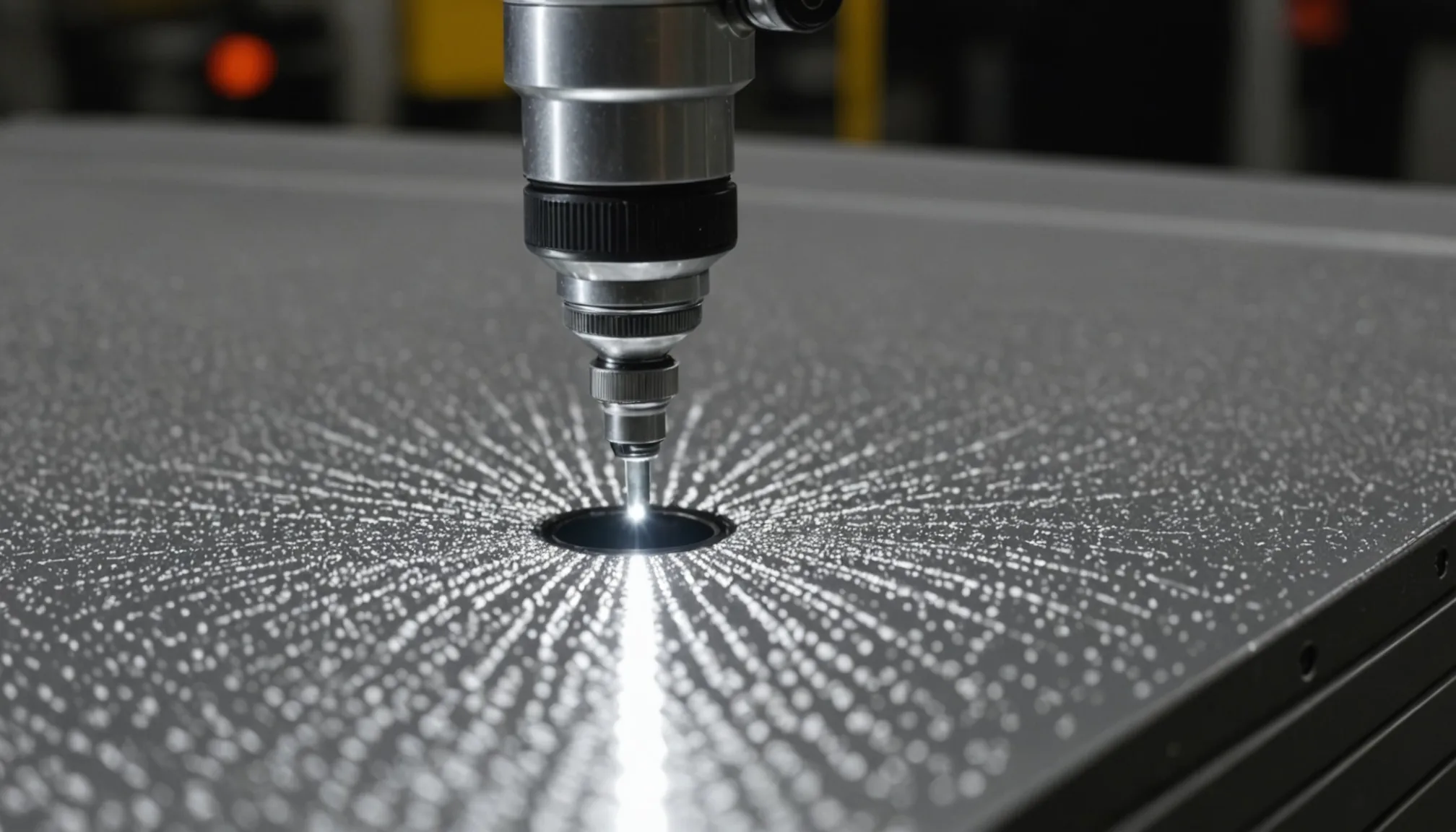

For AI classification, scratches are primarily texture features. A trained convolutional model picks up the elongated gradient signature under structured lighting. The challenge is discriminating shallow scratches from grain boundaries in the base metal, which requires consistent lighting normalization across camera setups.
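To make "elongated gradient signature" concrete, here is a hand-written feature that captures the same idea: structure-tensor coherence, which is near 1 when image gradients share one direction and near 0 when they point every way. This is an illustrative stand-in, not what a convolutional model computes internally, and it does not by itself solve the grain-boundary problem. The patches are synthetic.

```python
import math

def gradient_coherence(patch):
    """Structure-tensor coherence in [0, 1] for a 2D list of gray levels."""
    rows, cols = len(patch), len(patch[0])
    jxx = jyy = jxy = 0.0
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            # Central-difference gradients in x (columns) and y (rows).
            gx = (patch[r][c + 1] - patch[r][c - 1]) / 2.0
            gy = (patch[r + 1][c] - patch[r - 1][c]) / 2.0
            jxx += gx * gx
            jyy += gy * gy
            jxy += gx * gy
    trace = jxx + jyy
    if trace == 0.0:
        return 0.0  # perfectly flat patch: no gradient, no direction
    return math.sqrt((jxx - jyy) ** 2 + 4.0 * jxy * jxy) / trace

# Synthetic 9x9 patches: one dark vertical line, one radially symmetric bowl.
scratch_patch = [[0.0 if c == 4 else 1.0 for c in range(9)] for r in range(9)]
radial_patch = [[float((r - 4) ** 2 + (c - 4) ** 2) for c in range(9)] for r in range(9)]
print(gradient_coherence(scratch_patch), gradient_coherence(radial_patch))
```

The line-like patch scores high and the radially symmetric one scores low, which is the directional-versus-isotropic contrast a learned model has to pick up from real images.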

2. Dents and Dings

Dents are localized plastic deformation without surface material removal. Dings are the same thing, typically smaller, often handling-origin. The OEM criterion is usually expressed as a maximum visible relief depth when illuminated at a specified angle, not as a fixed dimensional threshold.

Geometry features dominate here. AI models classify dents using photometric stereo or structured-light surface maps to detect local curvature deviation. Flat-field imaging under raking light can work at line speed, but you need a consistent lamp angle across the entire field of view, within ±2 degrees, or false-positive rates climb sharply on curved panel sections.
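As a rough illustration of "local curvature deviation", the sketch below applies a discrete Laplacian to a height map and flags cells where it exceeds a threshold. It assumes you already have a surface map in millimeters on a regular grid; the threshold and the data are hypothetical, and on a curved panel the nominal curvature would have to be subtracted first.

```python
def laplacian_map(height_mm):
    """4-neighbor discrete Laplacian of a 2D height map (border cells left at 0)."""
    rows, cols = len(height_mm), len(height_mm[0])
    out = [[0.0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            out[r][c] = (height_mm[r - 1][c] + height_mm[r + 1][c]
                         + height_mm[r][c - 1] + height_mm[r][c + 1]
                         - 4.0 * height_mm[r][c])
    return out

def curvature_outliers(height_mm, threshold):
    """Grid cells whose absolute Laplacian exceeds the threshold."""
    lap = laplacian_map(height_mm)
    return [(r, c) for r, row in enumerate(lap)
            for c, v in enumerate(row) if abs(v) > threshold]

# Flat 7x7 panel with one 0.1 mm depression, and a uniformly tilted panel.
dented = [[0.0] * 7 for _ in range(7)]
dented[3][3] = -0.1
tilted = [[0.01 * c for c in range(7)] for _ in range(7)]
print(curvature_outliers(dented, 0.2), curvature_outliers(tilted, 0.2))
```

A uniform tilt produces no response, which is why a curvature-based feature is less sensitive to part pose than raw height or brightness.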

3. Burrs

Burrs are raised material projections at cut or sheared edges. They are a direct product of tooling condition. OEM specs define a maximum height, typically 0.1 to 0.3 mm depending on assembly interface criticality, and sometimes a maximum rollover radius.

Classification models target burrs using reflectance features: a burr creates a bright specular highlight at the edge under collimated illumination that a clean edge does not. Side-lit images with dark-field illumination are the standard setup. Minimum detectable burr height at a 300 mm/s conveyor speed is approximately 0.08 mm with a 5-megapixel sensor and appropriate optics. Below that, you're at the noise floor.
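A quick back-of-envelope calculation shows why a figure around 0.08 mm is plausible as a floor. The 300 mm/s speed and 0.08 mm burr height come from the text; the sensor width of 2448 pixels (a common 5-megapixel format), the 200 mm field of view, and the 100 microsecond exposure are assumptions chosen only for illustration.

```python
def mm_per_pixel(fov_mm, pixels_across):
    """Object-space size of one pixel along the given axis."""
    return fov_mm / pixels_across

def motion_blur_mm(speed_mm_s, exposure_s):
    """Distance the part travels while the shutter is open."""
    return speed_mm_s * exposure_s

SENSOR_WIDTH_PX = 2448     # assumed: typical 5 MP sensor, 2448 x 2048
FOV_WIDTH_MM = 200.0       # assumed field of view
EXPOSURE_S = 100e-6        # assumed exposure time
CONVEYOR_MM_S = 300.0      # from the text
BURR_HEIGHT_MM = 0.08      # from the text

scale = mm_per_pixel(FOV_WIDTH_MM, SENSOR_WIDTH_PX)
burr_px = BURR_HEIGHT_MM / scale
blur_px = motion_blur_mm(CONVEYOR_MM_S, EXPOSURE_S) / scale
print(f"{scale:.4f} mm/px, burr spans {burr_px:.2f} px, blur {blur_px:.2f} px")
```

Under these assumptions a 0.08 mm burr spans about one pixel and motion blur adds roughly a third of a pixel, so anything smaller is hard to separate from sensor noise. A narrower field of view or shorter exposure shifts the numbers accordingly.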

4. Oil Contamination

Stamping lubricants and die release agents leave residues that appear as irregular dark patches, staining, or iridescent films. The OEM acceptance criterion is usually binary: no visible contamination on designated mating surfaces and Class A zones. On structural parts, a maximum contamination area per square decimeter may apply.

Oil residue creates distinctive texture and reflectance signatures. Under UV illumination, most stamping lubricants fluoresce, which makes detection straightforward. Without UV, standard white-light inspection can miss thin films. In our data, UV-augmented setups reduce oil-miss rate by roughly 60% compared to white-light-only on wax-based lubricants. Worth the hardware cost.
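The two acceptance rules described above (binary on Class A and mating surfaces, area-limited on structural parts) can be sketched against a UV fluorescence image as follows. The intensity threshold, pixel scale, zone names, and area limit are all hypothetical; a real setup would calibrate the threshold against known-clean and known-contaminated samples.

```python
def contaminated_area_mm2(uv_image, threshold, mm_per_pixel):
    """Area of pixels at or above the fluorescence threshold, in mm^2."""
    bright = sum(1 for row in uv_image for v in row if v >= threshold)
    return bright * mm_per_pixel ** 2

def oil_disposition(area_mm2, zone_area_dm2, zone_class, max_mm2_per_dm2=None):
    """Binary rule for Class A / mating zones, area-density rule otherwise."""
    if zone_class in ("class_a", "mating"):
        return "reject" if area_mm2 > 0 else "accept"
    if max_mm2_per_dm2 is None:
        raise ValueError("structural zones need an area limit from the spec")
    return "reject" if area_mm2 / zone_area_dm2 > max_mm2_per_dm2 else "accept"

# Tiny synthetic UV image: three fluorescing pixels on a dark background.
uv = [[10, 10, 10, 10],
      [10, 200, 220, 10],
      [10, 10, 210, 10],
      [10, 10, 10, 10]]
area = contaminated_area_mm2(uv, threshold=128, mm_per_pixel=0.5)
print(area, oil_disposition(area, 1.0, "class_a"),
      oil_disposition(area, 1.0, "structural", max_mm2_per_dm2=5.0))
```

The same detection result can pass on a structural zone and fail on a Class A zone, which is why the zone class belongs in the taxonomy rather than in the model.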

5. Edge Cracking

Edge cracks are fractures originating at a sheared or formed edge, propagating into the body of the part. They are a material or process defect, not a handling defect. This distinction matters for PPAP. OEM criteria typically require zero tolerance: no visible edge cracks on any safety or structural part regardless of size.

Classification difficulty is high. Small edge cracks (under 0.5 mm) on sheared edges are easily masked by burrs or edge breakout. AI models use high-contrast edge illumination combined with a secondary detection pass at higher magnification on flagged regions. The practical minimum detectable crack length at line speed is 0.3 to 0.4 mm with current production-grade optics, assuming the part is fixtured consistently at the inspection station.
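The flag-then-confirm pattern can be sketched in software on a 1D intensity profile sampled along an edge. This is a simplified analogue, assuming a crack shows up as a run of dark samples: the coarse pass looks at blocks, and the fine pass measures run length only where a block was flagged. It does not model a real second camera or magnification change, and the block size, dark level, and sample pitch are hypothetical.

```python
def flag_blocks(profile, block, dark_level):
    """Coarse pass: start indices of blocks containing any sample below dark_level."""
    return [i for i in range(0, len(profile), block)
            if min(profile[i:i + block]) < dark_level]

def longest_dark_run(samples, dark_level):
    """Length, in samples, of the longest consecutive run below dark_level."""
    best = run = 0
    for v in samples:
        run = run + 1 if v < dark_level else 0
        best = max(best, run)
    return best

def confirm_cracks(profile, block, dark_level, mm_per_sample, min_len_mm):
    """Fine pass: measure dark-run length around each flagged block."""
    confirmed = []
    for start in flag_blocks(profile, block, dark_level):
        # Widen by one block each side so runs crossing a boundary are not cut.
        lo, hi = max(0, start - block), min(len(profile), start + 2 * block)
        length_mm = longest_dark_run(profile[lo:hi], dark_level) * mm_per_sample
        if length_mm >= min_len_mm:
            confirmed.append((start, length_mm))
    return confirmed

# Synthetic profile: a five-sample dark run and a single dark speck.
profile = [200] * 40
for i in range(12, 17):
    profile[i] = 50
profile[30] = 30
cracks = confirm_cracks(profile, block=10, dark_level=100,
                        mm_per_sample=0.1, min_len_mm=0.3)
print(cracks)
```

Both dark features trip the coarse pass, but only the longer run survives the length check. A run that spans two flagged blocks would be reported once per block, so a production version would need to merge duplicates.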

6. Corrosion Pitting

Pitting is localized substrate corrosion, most often from moisture ingress during storage or transport. It appears as small, dark, roughly circular craters. OEM acceptance criteria reference pit diameter and pit density (number per unit area). Pitting is a storage and handling quality escape, not a press defect, so its presence in an inspection dataset is a signal worth tracking separately.

AI models classify pitting using texture features similar to scratches but with a radial rather than directional signature. The challenge at line speed is distinguishing pits from punch marks or intentional texture patterns on some structural parts. Check candidate features against the part drawing before labeling or training on this category.
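A minimal sketch of how the drawing check and the diameter and density criteria could fit together: candidate detections inside zones that the drawing marks as intentional features are excluded before the limits are applied. The zone rectangles, limits, and blob coordinates are hypothetical.

```python
def inside(x_mm, y_mm, zone):
    """True if the point lies in an axis-aligned zone (x0, y0, x1, y1)."""
    x0, y0, x1, y1 = zone
    return x0 <= x_mm <= x1 and y0 <= y_mm <= y1

def pitting_disposition(blobs, intentional_zones, area_dm2,
                        max_diameter_mm, max_pits_per_dm2):
    """blobs: list of (x_mm, y_mm, diameter_mm). Returns (decision, pit_count)."""
    if area_dm2 <= 0:
        raise ValueError("area_dm2 must be positive")
    # Drop detections that fall on punch marks or textured areas per the drawing.
    pits = [b for b in blobs
            if not any(inside(b[0], b[1], z) for z in intentional_zones)]
    if any(d > max_diameter_mm for _, _, d in pits):
        return "reject: pit diameter", len(pits)
    if len(pits) / area_dm2 > max_pits_per_dm2:
        return "reject: pit density", len(pits)
    return "accept", len(pits)

# 100 mm x 100 mm zone (1 dm^2); the 0.6 mm blob sits on a drawn punch mark.
blobs = [(10, 10, 0.20), (12, 40, 0.18), (55, 55, 0.60), (80, 20, 0.25)]
punch_marks = [(50, 50, 60, 60)]
print(pitting_disposition(blobs, punch_marks, 1.0, 0.3, 5))
print(pitting_disposition(blobs, [], 1.0, 0.3, 5))
```

Without the drawing-derived exclusion zone, the punch mark is counted as an oversize pit and the part is rejected.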

Reference Table: Defect Classification at a Glance

| Defect Type | Typical OEM Criterion | Primary AI Feature | Min Detectable Size (at line speed) | Detection Challenge |
|---|---|---|---|---|
| Linear Scratch | Depth ≤0.05 mm, length limits by zone | Texture (elongated gradient) | 0.05 mm depth, 2 mm length | Grain boundary discrimination |
| Dent / Ding | Visible relief at specified angle | Geometry (local curvature) | 0.1 mm relief depth | Consistent lamp angle on curves |
| Burr | Height ≤0.1-0.3 mm by interface class | Reflectance (edge specular highlight) | 0.08 mm height | Rollover vs. burr discrimination |
| Oil Contamination | Zero on Class A / mating surfaces | Reflectance + UV fluorescence | Patch area ≥1 mm² | Thin film visibility (white-light only) |
| Edge Crack | Zero tolerance (structural parts) | High-contrast edge imaging | 0.3-0.4 mm length | Masking by burr or edge breakout |
| Corrosion Pitting | Diameter and density limits by zone | Texture (radial pattern) | 0.15 mm diameter | Confusion with intentional texture |
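The same reference data can be kept in machine-readable form so that labeling tools and model post-processing read from one source. This is one possible layout, not a prescribed schema: the class and field names are invented for illustration, the scratch entry stores only the depth limit, and the edge-crack entry uses the conservative end of the 0.3 to 0.4 mm range.

```python
from dataclasses import dataclass
from enum import Enum

class Defect(Enum):
    SCRATCH = "linear_scratch"
    DENT = "dent_ding"
    BURR = "burr"
    OIL = "oil_contamination"
    EDGE_CRACK = "edge_crack"
    PITTING = "corrosion_pitting"

@dataclass(frozen=True)
class DefectSpec:
    oem_criterion: str
    primary_feature: str
    min_detectable: float   # smallest size treated as detectable at line speed
    unit: str

TAXONOMY = {
    Defect.SCRATCH: DefectSpec("Depth <= 0.05 mm, length limits by zone", "texture", 0.05, "mm depth"),
    Defect.DENT: DefectSpec("Visible relief at specified angle", "geometry", 0.1, "mm relief"),
    Defect.BURR: DefectSpec("Height <= 0.1-0.3 mm by interface class", "reflectance", 0.08, "mm height"),
    Defect.OIL: DefectSpec("Zero on Class A / mating surfaces", "reflectance + UV", 1.0, "mm^2 area"),
    Defect.EDGE_CRACK: DefectSpec("Zero tolerance (structural parts)", "edge imaging", 0.4, "mm length"),
    Defect.PITTING: DefectSpec("Diameter and density limits by zone", "texture", 0.15, "mm diameter"),
}

def below_detection_limit(defect, size):
    """True if a feature of this size is smaller than the tabulated detection floor."""
    return size < TAXONOMY[defect].min_detectable

print(below_detection_limit(Defect.BURR, 0.05), below_detection_limit(Defect.BURR, 0.10))
```

Using an enum for the category names means a misspelled label fails loudly instead of silently creating a seventh class.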

Mapping Defect Taxonomy to PPAP Control Plans

Here's where classification taxonomy pays off beyond model accuracy. Each defect category in your taxonomy needs to map to a specific control point in your PPAP control plan, with a defined detection method, reaction plan, and responsible party.

In our tracking of PPAP submissions for vision-inspected parts, control plans that use vague defect descriptions ("surface imperfections") get rejected or require resubmission at significantly higher rates than those using specific, measurable defect criteria tied to the six categories above. The taxonomy is also what connects your inspection system to the PFMEA severity ratings. Edge cracks on a structural part carry a severity 9 or 10 in most OEM PFMEA templates. Shallow handling scratches on a non-visible zone may be severity 3 or 4. Those ratings drive your detection control requirements, which in turn set your system specification for the AI model.
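A simple completeness check can catch mapping gaps before submission. The sketch below assumes a control plan entry carries the fields named above plus a PFMEA severity on the usual 1 to 10 scale; the record layout, function name, and example entries are hypothetical, not a PPAP-mandated format.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ControlPlanEntry:
    defect_category: str
    pfmea_severity: int      # 1-10
    detection_method: str
    reaction_plan: str
    responsible: str

def control_plan_gaps(categories, entries):
    """List taxonomy categories without a complete entry, and entries outside the taxonomy."""
    problems = []
    by_category = {e.defect_category: e for e in entries}
    for cat in categories:
        entry = by_category.get(cat)
        if entry is None:
            problems.append(f"{cat}: no control plan entry")
            continue
        if not 1 <= entry.pfmea_severity <= 10:
            problems.append(f"{cat}: severity outside 1-10")
        for field in ("detection_method", "reaction_plan", "responsible"):
            if not getattr(entry, field).strip():
                problems.append(f"{cat}: {field} is empty")
    for entry in entries:
        if entry.defect_category not in categories:
            problems.append(f"{entry.defect_category}: not a taxonomy category")
    return problems

categories = ["edge_crack", "linear_scratch"]
entries = [
    ControlPlanEntry("edge_crack", 9, "inline edge-lit vision", "quarantine lot", "line quality engineer"),
    ControlPlanEntry("surface imperfections", 4, "visual", "", "operator"),
]
gaps = control_plan_gaps(categories, entries)
print(gaps)
```

Here the vague "surface imperfections" entry is flagged because it matches no taxonomy category, and the scratch category is flagged because nothing controls it.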

Practically: build your defect taxonomy document before you write the control plan, and before you label your first training image. The downstream time savings are substantial. We've seen programs where taxonomy-first teams reach production model sign-off 6 to 8 weeks faster than teams that labeled first and tried to reconcile definitions later.

Implementation Notes for Quality Engineers

A few things we've found matter in practice:

- Write acceptance criteria with reference images, not just words. A written depth limit is necessary but not sufficient. Labelers and operators need visual boundary samples at the limit condition.

- Version-control your taxonomy. When OEM specs change and a defect threshold shifts, every training image labeled under the old criterion needs to be flagged for review. A taxonomy that is not version-controlled creates silent dataset corruption.

- Separate defect-origin categories in your label schema. Process defects (burrs, edge cracks) and handling or storage defects (dings, pitting) have different root cause owners and different PFMEA entries. Mixing them in a single label makes CAPA traceability painful.

- Validate minimum detectable size against your actual conveyor speed and sensor setup, not spec-sheet numbers. The figures in the table above are from our production deployments, but your optics, conveyor vibration, and part fixturing will shift those numbers.
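The version-control and defect-origin points can be combined in the label record itself. This is a minimal sketch with made-up field names and version strings: each label stores the taxonomy version it was created under, origin is derived from the category, and a helper lists the images to re-review when a criterion changes.

```python
from dataclasses import dataclass

# Origin per category, following the process/handling split described above.
ORIGIN = {
    "burr": "process",
    "edge_crack": "process",
    "ding": "handling",
    "pitting": "handling",
}

@dataclass(frozen=True)
class Label:
    image_id: str
    category: str
    taxonomy_version: str   # version of the written criteria used when labeling

    @property
    def origin(self):
        return ORIGIN[self.category]

def needs_review(labels, changed_categories, current_version):
    """Image IDs labeled under an older taxonomy version for a category whose criterion changed."""
    return [l.image_id for l in labels
            if l.category in changed_categories
            and l.taxonomy_version != current_version]

labels = [
    Label("img_001", "burr", "v1"),
    Label("img_002", "burr", "v2"),
    Label("img_003", "ding", "v1"),
]
# Suppose the burr height limit changed in v2.
stale = needs_review(labels, {"burr"}, "v2")
print(stale, labels[2].origin)
```

Only the burr image labeled under v1 is flagged; the ding label is untouched because its criterion did not change.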

Getting the Taxonomy Approved

One last thing. The taxonomy document itself typically requires OEM quality engineering sign-off before you can use it as the basis for an approved inspection process. Build that review into your program plan. The defect category definitions, the acceptance criteria per zone, and the minimum detectable size thresholds all need to be documented in a format that a supplier quality engineer on the customer side can review and endorse.

That approval process takes time. Start it early. In our experience, getting taxonomy alignment between your team and the OEM quality team is often the longest-lead item in a vision inspection deployment, longer than model training, longer than camera integration. It is also the work that determines whether your system actually controls what it is supposed to control.

Deploying AI visual inspection on a stamped metal line? Talk to our team about defect taxonomy setup and control plan integration.